Waboom is an AI voice agent startup that also runs corporate AI training. After an 8-week optimisation cycle pulling all four AI-visibility levers, the 48-hour post-launch window delivered four inbound ChatGPT-sourced leads across three countries.

Waboom AI is a startup building AI voice agents for businesses, with a complementary corporate AI training arm. Its ideal customer profile (ICP) spans US, NZ and AU SMBs evaluating voice agents, and enterprises commissioning hands-on AI training. Before this engagement, Waboom was paying for traditional SEO tooling that, by the founder's account, cost almost triple and still did not supply the action points he needed. Waboom was also largely absent from ChatGPT's answer set for both offerings.

"We had used Ahrefs in the past, but the cost was almost triple and it was not giving me the action points I needed. AuraScope gave us clearer visibility into what needed fixing and updating on our site."

Leonardo Garcia Curtis, Founder, Waboom AI

Waboom's work was strong. The problem was visibility, not quality. When SMB buyers asked ChatGPT "who can run AI training for my team?" or "which voice-agent vendors should I look at?", the answer set was dominated by larger US-based providers. Without LLM pickup, Waboom had to earn every deal cold.

Ahrefs returned keyword volume. It did not answer the only question that mattered: what, specifically, do we change on our site to get recommended by ChatGPT?

Waboom rebuilt its site for the AI-search era and used AuraScope to point that work at the highest-leverage fixes. The work ran for two months (the 8-week optimisation cycle), and the team pulled all four levers in sequence.

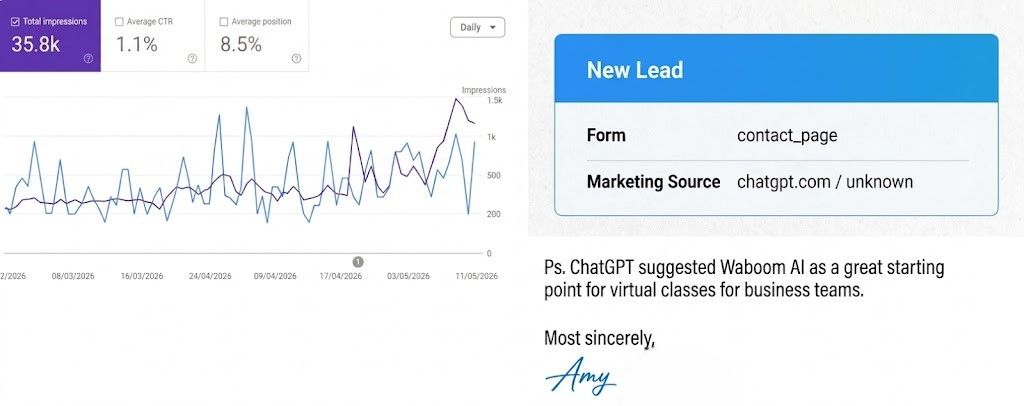

Within 48 hours of the optimised site going live, Waboom received four inbound enquiries: two from New Zealand, one from Australia, one from the USA. Every one of the four named ChatGPT as the referral source in their first message.

One prospect pasted the recommendation verbatim:

"ChatGPT suggested Waboom AI as a great starting point for virtual classes for business teams."

Inbound prospect, sourced via ChatGPT

A US-based prospect choosing an NZ vendor in the same window matters here. We do not have a side-by-side ChatGPT prompt log proving the engine ranked Waboom above named US alternatives, so we present this as observed buyer behaviour, not as a head-to-head ranking claim.

LLMs preferentially recommend brands that combine all four levers: external trust (links, reviews), crawlability (semantic, schema-marked pages), freshness (visible timestamps), and coverage (content that answers the buyer's actual question). AuraScope identified which pages and which levers needed work on waboom.ai. The team executed the list. AEO pillar scores rose during the same window the first ChatGPT-sourced leads landed. We treat that as directional confirmation, not as proof of a single causal chain.
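To make the crawlability and freshness levers concrete, here is a minimal sketch of generating schema.org `Article` markup with explicit `datePublished` and `dateModified` fields. The function name, URL, headline and dates are hypothetical placeholders, not Waboom's actual pages or AuraScope's output; it only illustrates what a "schema-marked page with a visible timestamp" can look like.

```python
import json
from datetime import date


def build_article_jsonld(headline: str, url: str, published: date, modified: date) -> str:
    """Return a schema.org Article JSON-LD string with explicit freshness fields."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        # ISO 8601 dates give crawlers a machine-readable timestamp.
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    return json.dumps(data, indent=2)


# Hypothetical page: the URL and dates are placeholders for illustration only.
snippet = build_article_jsonld(
    "AI training for business teams",
    "https://example.com/ai-training",
    date(2025, 1, 10),
    date(2025, 3, 1),
)
print(snippet)
```

The resulting JSON would typically be embedded in a `<script type="application/ld+json">` tag on the page, alongside a human-visible "last updated" date.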

Read the canonical methodology page for the full protocol. The relevant specifics for this engagement:

LLM recommendations are probabilistic. The same query can return different results across users and sessions. AuraScope can show that AEO scores rose alongside the implementation of these changes, that the 48-hour post-launch lead spike happened, and that all four inbound leads named ChatGPT as the source. We cannot prove ChatGPT recommended Waboom solely because of these specific changes, nor that a future query will return the same answer. AI-search attribution is the open problem we are actively working on; the methodology page describes the current state of that model.
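Because the same prompt can return different answers, one way to reason about visibility is as a mention rate over repeated runs, with an uncertainty range, and not as a single yes or no. The sketch below uses a Wilson score interval; it is an illustrative assumption, not AuraScope's attribution model, and the run counts are made up.

```python
import math


def wilson_interval(mentions: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for the share of runs in which a brand was mentioned.

    z = 1.96 corresponds to roughly 95% confidence.
    """
    if runs <= 0 or not 0 <= mentions <= runs:
        raise ValueError("need runs > 0 and 0 <= mentions <= runs")
    p = mentions / runs
    denom = 1 + z * z / runs
    centre = (p + z * z / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


# Hypothetical numbers: a brand appears in 6 of 30 repeated runs of one prompt.
low, high = wilson_interval(6, 30)
print(f"mention rate 20%, plausible range {low:.0%} to {high:.0%}")
```

With only a few dozen runs the range stays wide, which is one reason a short-window lead spike is better read as directional evidence than as proof.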

Waboom's story compresses the AuraScope value loop into one sentence: see where you are, fix the highest-leverage lever, ship, measure.

What AuraScope contributed:

If your team is in Waboom's position (quality work, weak LLM pickup, budget pressure on the legacy SEO stack), the AuraScope playbook compresses months of guessing into a focused two-month sprint.

Daily audits across ChatGPT, Gemini and Perplexity. A prioritised list of what to change, in what order.
